The Anthropic-OpenAI-Pentagon saga explained

The Pentagon has labeled Anthropic a supply-chain risk, but now OpenAI is making the same requests


Wednesday, March 4th, 2026

Dear readers,

Welcome to my newsletter AI Law Brief, where I analyze the latest news about Big Tech scandals, AI Governance failures, and tech policy insights.

I am Dr. Chiara Gallese, a scholar working at the intersection between Law and Computer Science. I am a researcher at Tilburg University (Netherlands) and an Adjunct Professor of Digital Ethics at Ca’ Foscari University (Italy).

My work is not limited to academic insights: I actively participate in policy-making, e.g., by taking part in the working groups for the drafting of the two EU AI Codes of Practice promoted by the EU Commission’s AI Office. I also have industry experience, having worked as a consultant for multinational corporations and major banks. You can read my full CV here: Full CV.

In my newsletter, I adopt several important principles:

a) I always base my analysis on facts and news that I collect from reliable sources and first-hand experience. I am very careful, to the best of my ability, not to spread fake news, even when I report advance information from my own sources;

b) I base my analysis on my actual expertise and never make claims I am unsure of, grounding my opinions in scientific evidence, peer-reviewed research, and my own expert knowledge;

c) I do my best to maintain a balanced perspective instead of a black-and-white view, e.g., I weigh the benefits and drawbacks of each technology I comment on;

d) I try my best not to slander any living person, as this is a criminal offence in Italy; therefore, I cannot use swear words or certain terms even when people (e.g., criminals) deserve them. I understand this might make it look like I am afraid of speaking up, but this is not true.

In this issue, I will focus on the recent troubles Anthropic faced with the Pentagon, and on how OpenAI made the same mistake.

This can tell us a lot about how AI Governance dynamics play out in the real world when powerful actors are involved.

But first, let’s take a step back to understand what happened.

Anthropic had a $200 million contract with the Department of War (DoW)

On 11th July, 2024, it was announced that Anthropic had partnered with Palantir (a very problematic company, which I will discuss in a later issue) and Amazon Web Services to give U.S. intelligence agencies access to Claude through Palantir’s AI Platform (AIP). The announcement, as you can see, is full of AI hype propaganda and depicts exaggerated capabilities (like “advanced risk forecasting capabilities”). (Maybe this is why the Pentagon considered the platform particularly valuable?)

This version of Claude was not the regular model available to customers, but a fine-tuned version custom-made for government agencies, trained on classified documents and connected to Palantir technology. However, the underlying architecture remained the same and was still powered by the Claude 3 and 3.5 models.

At that time, trustworthy AI was a core principle for Anthropic:

“We’re building AI systems to be reliable, interpretable, and steerable precisely because we recognize that in government contexts, where decisions affect millions and stakes couldn’t be higher, these qualities are essential. We believe democracies must work together to ensure AI development strengthens democratic values globally by maintaining technological leadership to protect against authoritarian misuse. […] Our commitment to responsible AI deployment, including rigorous safety testing, collaborative governance development, and strict usage policies, makes Claude uniquely suited for sensitive national security applications.”

[Source: Anthropic blog, bold is mine]

Therefore, there is no doubt that safeguards were part of the agreed Terms and Conditions (i.e., the contract) with the DoW.

In late February 2026, the DoW urged Anthropic to lift Claude’s usage restrictions

On February 19th, 2026, Anthropic CEO Dario Amodei said at the Indian AI Action Summit that he was concerned about the ethical issues raised by AI and its possible misuse.

“On the side of risks, I’m concerned about the autonomous behavior of AI models, their potential for misuse by individuals and governments, and their potential for economic displacement.”

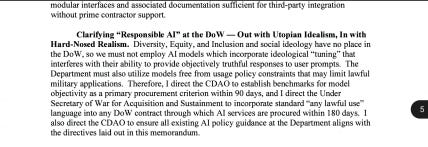

Shortly after, the Defense Secretary formally demanded that Anthropic remove all usage restrictions and grant the Pentagon access to Claude for any lawful purpose, which includes planning military operations and using Claude in lethal autonomous weapons, as clearly defined in the latest DoW AI memo issued on January 9th. This memo basically banned AI Ethics in the military, as I explained in a previous LinkedIn post.

If the company refused, the government would:

Terminate the existing contract;

Declare Anthropic a supply-chain risk (barring it from government contracts);

Compel Anthropic to supply Claude under the Defense Production Act of 1950.

However, Amodei refused to comply, arguing that the technology was not ready to be deployed without restrictions and that two particular uses were contrary to the company’s values:

“[…] in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values. Some uses are also simply outside the bounds of what today’s technology can safely and reliably do. Two such use cases have never been included in our contracts with the Department of War, and we believe they should not be included now:

Mass domestic surveillance. We support the use of AI for lawful foreign intelligence and counterintelligence missions. But using these systems for mass domestic surveillance is incompatible with democratic values. AI-driven mass surveillance presents serious, novel risks to our fundamental liberties. To the extent that such surveillance is currently legal, this is only because the law has not yet caught up with the rapidly growing capabilities of AI. For example, under current law, the government can purchase detailed records of Americans’ movements, web browsing, and associations from public sources without obtaining a warrant, a practice the Intelligence Community has acknowledged raises privacy concerns and that has generated bipartisan opposition in Congress. Powerful AI makes it possible to assemble this scattered, individually innocuous data into a comprehensive picture of any person’s life—automatically and at massive scale.

Fully autonomous weapons. Partially autonomous weapons, like those used today in Ukraine, are vital to the defense of democracy. Even fully autonomous weapons (those that take humans out of the loop entirely and automate selecting and engaging targets) may prove critical for our national defense. But today, frontier AI systems are simply not reliable enough to power fully autonomous weapons. We will not knowingly provide a product that puts America’s warfighters and civilians at risk. We have offered to work directly with the Department of War on R&D to improve the reliability of these systems, but they have not accepted this offer. In addition, without proper oversight, fully autonomous weapons cannot be relied upon to exercise the critical judgment that our highly trained, professional troops exhibit every day. They need to be deployed with proper guardrails, which don’t exist today.”

[Source: Amodei’s statement]

The DoW declared Anthropic a supply-chain risk but used Claude to plan the Iran strike

Following Anthropic’s refusal, the DoW followed through on its threat and labeled the company a supply-chain risk, banning it from all agencies and contractors after a six-month grace period.

On top of that, it also declared that it would still use Claude for high-risk military operations, as it had done for the strikes in Venezuela and Iran.

OpenAI jumped to replace Anthropic but immediately regretted it

Immediately after Anthropic was blacklisted, OpenAI jumped on the $200 million contract and began negotiations to become the new AI supplier.

However, soon after, OpenAI CEO Sam Altman declared that OpenAI would maintain the same guardrails and safeguards requested by Anthropic, and that the deal had looked “opportunistic and sloppy”.

On February 28th, OpenAI declared on X that its deal with the DoW had more guardrails than any previous contract.

In a blog post, the company explained:

We think our agreement has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s. Here’s why.

We have three main red lines that guide our work with the DoW, which are generally shared by several other frontier labs:

No use of OpenAI technology for mass domestic surveillance.

No use of OpenAI technology to direct autonomous weapons systems.

No use of OpenAI technology for high-stakes automated decisions (e.g. systems such as “social credit”).

At this point, we must ask:

Why did the DoW ask Anthropic for the removal of AI safeguards from the contract?

Why did Anthropic, and later OpenAI, refuse to comply?

Why does the DoW appear to have agreed to keep safeguards on OpenAI’s models?

Before continuing with the discussion, please bear in mind that public statements about intelligence or military operations can often turn out to be untrue, even when they come directly from the government or a trusted source. This happens because disclosing the correct information might:

Cause panic in the population;

Put national security at risk;

Discourage or scare soldiers, increasing the risk of desertion;

Give an advantage to foreign enemies;

Give an advantage to political adversaries or influence judges;

Alienate voters in the next elections.

Therefore, we cannot be sure about the real reasons behind the DoW’s declarations and actions, and we can only make educated guesses based on historical patterns and on information about the geopolitical situation collected in recent years.
